By Greg Birzes, Chief Technology Officer, Pearson Professional Assessments

While the growing use of online exams has broadened access to some credentials, it has also introduced new challenges to maintaining test integrity. As traditional methods of cheating evolve into more sophisticated, technology-driven approaches, AI is often framed as part of the problem. But when designed and deployed responsibly, AI can be a powerful part of the solution. However, it’s important to acknowledge that online proctoring isn’t the right fit for every use case.

Following are five ways AI is increasing the security, reliability, and fairness of online testing:

AI in online testing isn’t one tool—it’s layered systems of intelligent checks.

Online proctoring uses multiple AI sensors working together (not just facial recognition) across the physical and digital testing environment.

AI doesn’t replace human judgment—it strengthens it.

AI flags anomalies, but humans (proctors) make the final decisions to maintain fairness and accountability.

The biggest advancement isn’t the AI sensors—it’s how their data is interpreted.

AI analyzes suspicious patterns of behavior over time, rather than treating every single alert as an isolated incident.

Stronger security doesn’t mean more stress for candidates—it can enhance the value of their credential while improving their experience.

Automation can speed up candidate check-in and streamline the overall testing experience.

Online proctoring requires continuous investment to stay ahead of evolving threats.

Maintaining test security is an ongoing process that needs continuous tuning, evaluation, and human oversight alongside AI.

1. AI in online proctoring isn’t one tool—it’s layered systems of intelligent checks.

A common misconception is that AI-powered online proctoring is simply facial recognition to verify a candidate’s identity. In reality, facial recognition is just one component of a much more sophisticated system: a complex suite of technologies working in concert to increase security.

The truth is that a single data point is never enough. That's why effective online proctoring relies on layered systems of AI-augmented sensors, each analyzing a different element, from candidate identity and eligibility to the security of the candidate’s physical and digital testing environment.

This wasn't always the case. The earliest versions of AI-assisted online proctoring across the industry were limited and, in some cases, prone to false positives. The solution was to move from sensors that each raised alerts in isolation to sensors whose signals are integrated. Today's next-generation sensors are the result of that effort. They’re more accurate, more focused, and built to work together.

This layered model is designed to see the bigger picture. It combines multiple signals before confirming a security threat, cutting through the noise so that security decisions rest on a broader, more reliable body of evidence rather than a single data point.

2. AI doesn’t replace human judgment—it strengthens it.

A persistent myth is that AI decides whether someone has cheated on an exam.

Test-takers often share stories of being “flagged by AI,” which gets interpreted as an automatic cheating verdict. That’s not how we approach online proctoring. At Pearson Professional Assessments, humans remain at the center of all critical decisions related to exam security. AI is used to assist, not replace, trained assessment professionals (proctors) who are responsible for live exam oversight. Its role is to monitor multiple signals simultaneously and surface potential anomalies, such as unusual eye movement or background noise.

Think of AI as a second pair of eyes, helping the proctor see more, and more consistently, across many test sessions at once. When AI raises a security concern during a remote exam, any intervention remains human-led, which is essential for fairness, accountability, and confidence in high-stakes assessments.

Our vigilance doesn’t end when the exam does. We continue searching for evidence of misconduct long after the final response is logged, because details often emerge days or weeks later as part of a wider investigation.

3. The biggest advancement isn’t the AI sensors—it’s how their data is interpreted.

Adding more AI sensors doesn’t automatically improve security. What matters is how information from those sensors is interpreted and used. For example, a candidate looking away once, stretching, or making a brief noise in their testing environment typically generates low-severity flags, if any. Concerns arise only when repeated or combined behaviors suggest potential rule violations.

Isolated events can sometimes be misleading. Patterns over time are much more meaningful. By analyzing signals collectively, AI helps distinguish between a harmless stretch and genuinely suspicious activity—reducing unnecessary distractions while strengthening oversight.
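To make the difference between isolated events and patterns over time concrete, here is a minimal Python sketch. It is not Pearson’s implementation: the event kinds (`gaze_away`, `noise`, `voice`), the per-event severity scores, the 120-second window, and both thresholds are illustrative assumptions. A window of activity is surfaced only when the same behavior repeats several times, or when different kinds of signals co-occur with enough combined severity; a single stretch or a brief noise is left alone.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Event:
    t: float         # seconds since session start
    kind: str        # hypothetical signal type, e.g. "gaze_away", "noise", "voice"
    severity: float  # hypothetical per-event score in [0, 1]

def suspicious_windows(events, window=120.0, min_repeats=3,
                       min_kinds=2, score_threshold=1.5):
    """Return (start, end) times of windows whose events look suspicious.

    Starting at each event, collect every event within `window` seconds.
    The window is flagged if one kind of event repeats at least
    `min_repeats` times, or if at least `min_kinds` different kinds
    co-occur and their summed severity reaches `score_threshold`.
    Overlapping windows may each be reported.
    """
    ordered = sorted(events, key=lambda e: e.t)
    flagged = []
    for i, first in enumerate(ordered):
        # Events are sorted, so this keeps a contiguous run starting at `first`.
        in_window = [e for e in ordered[i:] if e.t - first.t <= window]
        counts = {}
        for e in in_window:
            counts[e.kind] = counts.get(e.kind, 0) + 1
        repeated = max(counts.values()) >= min_repeats
        combined = (len(counts) >= min_kinds
                    and sum(e.severity for e in in_window) >= score_threshold)
        if repeated or combined:
            flagged.append((first.t, in_window[-1].t))
    return flagged

# A stretch followed by a brief noise stays below the bar.
print(suspicious_windows([Event(30.0, "gaze_away", 0.2),
                          Event(45.0, "noise", 0.3)]))      # → []
# Three glances away within two minutes form a pattern.
print(suspicious_windows([Event(10.0, "gaze_away", 0.2),
                          Event(40.0, "gaze_away", 0.2),
                          Event(70.0, "gaze_away", 0.2)]))  # → [(10.0, 70.0)]
```

The function only returns windows for review; in keeping with the human-in-the-loop approach described above, deciding whether a flagged window reflects misconduct is left to a proctor.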

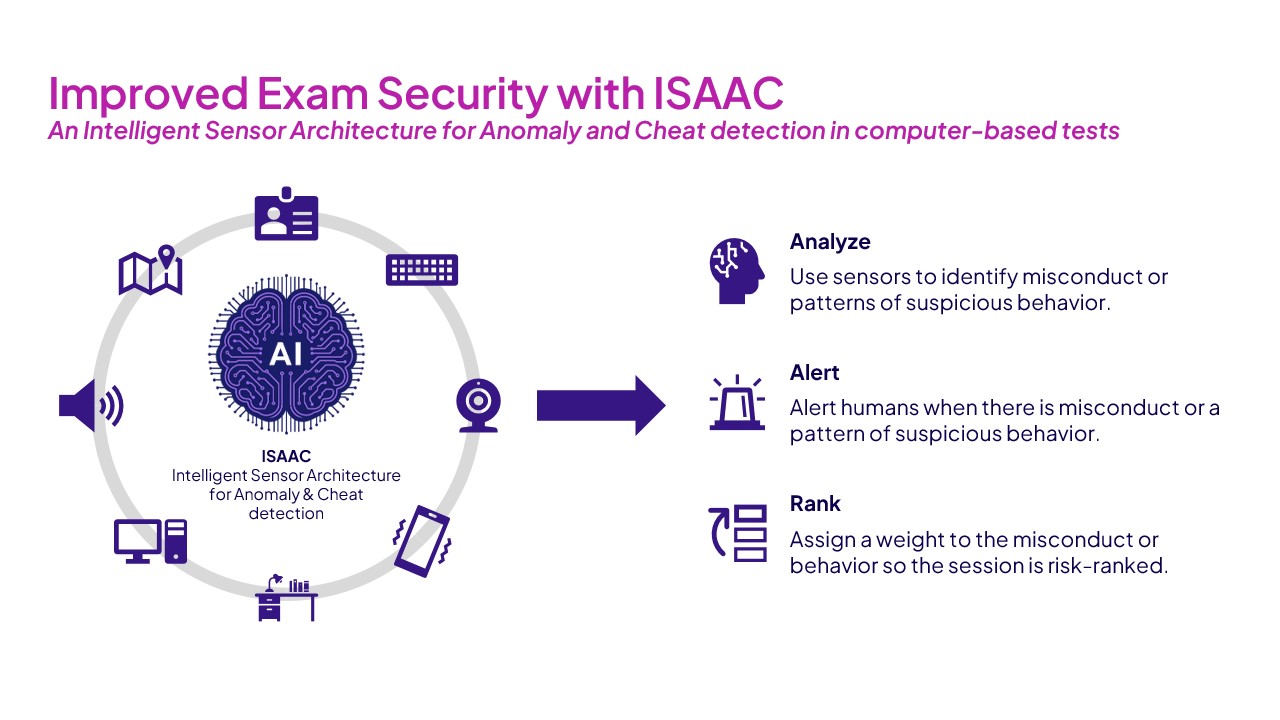

With that principle in mind, we engineered our Intelligent Sensor Architecture for Anomaly and Cheat Detection (ISAAC): an advanced intelligence engine that synthesizes large volumes of information throughout the online testing process. It doesn't just see individual alerts; it connects the dots between them, analyzing patterns across every signal to distinguish a genuine threat from a false alarm. Instead of presenting our proctors with a flat list of every potential flag, it triages the data, guiding their attention to the most critical areas of risk so they can apply human oversight where it has the greatest impact.

4. Stronger security doesn’t mean more stress for candidates—it enhances the value of their credential and can improve their testing experience.

With high-stakes exams, security and convenience are often portrayed as opposing forces, but they don’t have to be.

AI has streamlined much of the online exam check-in process. Candidates who pass a range of initial automated checks can progress more quickly toward their exam session, going straight into the proctor queue.

This reduces stress and allows candidates to focus on what matters most: their performance in their exam. At the same time, human proctors remain available whenever additional scrutiny is required.

Well-designed security measures can both contribute to exam integrity and support a better candidate experience, which is why developments in AI have been so transformative in this area. More secure exams are also more effective at preventing misconduct, which gives credentials greater value.

5. Online proctoring requires continuous investment to stay ahead of evolving threats.

Perhaps the most important thing to understand about AI and online proctoring is that it isn’t a one-time implementation. As a test delivery model, online proctoring requires ongoing investment and continual fine-tuning of its capabilities to maintain accuracy and fairness. A key best practice is ongoing, real-time evaluation of security systems by a dedicated human team that analyzes the system's performance and works alongside the AI.

AI can be a valuable tool for creating fair and reliable online assessments, but only when designed responsibly and supported by human oversight. This approach is key to building a trusted, controlled environment that protects the value of a credential and gives every candidate the opportunity to succeed.

Keeping the human in the loop

The goal of AI in online testing is to increase fairness and integrity for every test-taker.

This isn’t achieved with a single, all-seeing algorithm, but with a layered system of smart AI sensors that assist, rather than replace, human judgment. This human-centered approach serves to strengthen security while supporting a more streamlined and less stressful testing experience for the candidate.

Improved Exam Security with ISAAC

An Intelligent Sensor Architecture for Anomaly and Cheat Detection (ISAAC) in computer-based tests

Analyze: Use sensors to identify misconduct or patterns of suspicious behavior.

Alert: Alert humans when there is misconduct or a pattern of suspicious behavior.

Rank: Assign a weight to the misconduct or behavior so the session is risk-ranked.
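The Analyze, Alert, and Rank steps can be illustrated with a short Python sketch of weighted risk ranking. This is a hypothetical illustration, not ISAAC’s actual design: the behavior labels, the `WEIGHTS` table, `ALERT_THRESHOLD`, and the helper names `risk_score` and `triage` are all assumptions chosen for clarity. Each session’s observed behaviors are weighted and summed, sessions are sorted so the highest-risk ones come first, and sessions at or above the threshold are flagged for a proctor.

```python
from dataclasses import dataclass, field

# Hypothetical weights per behavior type (assumed values, not Pearson's).
WEIGHTS = {
    "gaze_away": 1.0,
    "background_voice": 2.0,
    "second_person": 5.0,
    "prohibited_device": 8.0,
}
ALERT_THRESHOLD = 6.0  # hypothetical score at which a proctor is alerted

@dataclass
class Session:
    session_id: str
    # Analyze: behavior labels reported by the sensors during the session.
    observations: list = field(default_factory=list)

def risk_score(session):
    """Rank step: sum the weight of every observed behavior.

    Behaviors missing from WEIGHTS contribute nothing.
    """
    return sum(WEIGHTS.get(b, 0.0) for b in session.observations)

def triage(sessions, threshold=ALERT_THRESHOLD):
    """Return (ranked, alerts).

    ranked: (score, session_id) pairs, highest risk first.
    alerts: IDs of sessions at or above the threshold (Alert step).
    """
    scored = [(risk_score(s), s.session_id) for s in sessions]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    alerts = [sid for score, sid in ranked if score >= threshold]
    return ranked, alerts

sessions = [
    Session("A", ["gaze_away"]),
    Session("B", ["gaze_away", "background_voice", "second_person"]),
    Session("C", ["background_voice"]),
]
ranked, alerts = triage(sessions)
print(ranked)  # [(8.0, 'B'), (2.0, 'C'), (1.0, 'A')]
print(alerts)  # ['B']
```

A weighted sum is only one simple way to produce a risk ranking; the output orders and flags sessions, while the decision about whether misconduct occurred stays with a human proctor.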